The Promises and Perils of AI in Education

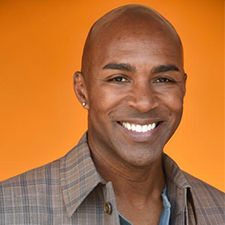

A conversation with author Ken Shelton

Educator, Keynote Speaker, and Author

Artificial intelligence has exploded into the education landscape with equal parts promise and anxiety. The discourse is loud, but too often it skims the surface—focused on shortcuts, efficiency hacks, and the next shiny tool instead of the deeper literacy, ethics, and human-centered leadership required to use AI well. Few voices cut through that noise with more clarity and perspective than Ken Shelton.

A longtime educator, keynote speaker, and co-author of “The Promises and Perils of AI in Education,” Ken has spent more than two decades at the intersection of learning, technology, equity, and systems change. He challenges the “microwave meal” mentality dominating today’s AI conversations and pushes schools toward intentional growth—where acumen, fluency, and bias awareness matter more than tips and tricks.

You say most conversations about AI in education are focused on efficiency instead of literacy and understanding. What does true AI literacy look like in schools?

If you look at the history of technology in education, you tend to see three camps. You’ve got the evangelists who will tinker, test, and play with every new tool. You’ve got the resisters, who aren’t really anti-tech—they’re anti-change. And then you’ve got the folks in the middle who sway with whichever way the wind is blowing.

Most of what we’re doing with AI right now sits at the “tips, tricks, and how-to” level. It’s like teaching people how to heat up a microwave meal. You push a few buttons, a few minutes later you’re fed, and you convince yourself, “Hey, this works.” But long-term, a diet of microwave meals isn’t healthy.

True AI literacy is teaching people how to shop for ingredients, how to cook, how the equipment works, how flavors interact, and how different methods—baking, roasting, grilling—change the outcome. Translated to AI, that means digging into acumen, fluency, bias awareness, ethics, and context. It takes more time and intentionality, but it centers agency and autonomy.

Right now, a lot of professional learning is the microwave version of AI. I’m pushing for thoughtful, sustainable professional development that builds foundational understanding so educators aren’t stuck when the current “favorite tool” disappears.


As a co-author of “The Promises and Perils of AI in Education,” what worries you most about the “shortcut culture” emerging around AI, especially for the next generation?

The “promise” side is real: Students and educators can develop powerful workflows, streamline some tasks, and free up time. But the “peril” is the unconscious overreliance that creeps in when we only operate at the tips-and-tricks level.

If your AI learning is reduced to “What’s the easiest tool?” you get comfortable with a narrow set of platforms and depend on them. Education has been here before: We fall in love with a tool, build everything around it, and three years later it’s gone. If you don’t have foundational skills, you’re stuck.

I think of it like literacy. When my students learned different documentary storytelling structures, it didn’t matter whether they used a phone camera, a traditional camera, or—today—an AI video generator. The medium changes, but the storytelling fundamentals are evergreen.

With AI, our job is to help learners build those malleable, transferable skills—critical thinking, ethical reasoning, understanding bias—so they can navigate whatever platforms come and go.

How do you see technology, especially AI, impacting our humanness? Is that something we should worry about?

You’re right to worry about that. Technology can absolutely compromise our interpersonal relationships when we’re not consciously aware of how we’re using it.

My stance is “human-centered leadership.” Before I use AI for something like “personalized learning,” I need to actually know the person. The relationship comes first; the tech should augment, amplify, or accelerate what we’re doing for that learner—not replace it. You can’t microwave relationships. You can use AI, but you can’t skip the work of knowing your students.

How do we close the gap between AI policy and practice in a way that protects both equity and innovation?

One of the biggest gaps is that a lot of systems are stuck at “policy” when what they really need is guidance. Policies often get distilled into binaries—do this, don’t do that.

Equity gets compromised when we flood classrooms with “high-volume tech for low-order thinking.” If the only message is “Don’t let AI write your essay,” but we fail to teach students how to use it ethically, we’re not building capacity—we’re just policing behavior.

That’s why I focus on guidance and ethical leadership: asking students questions like, “At what point is AI supporting your learning, and at what point is it replacing your thinking?” and encouraging them to ask themselves, “What long-term benefits am I sacrificing for this short-term gain?”

You can’t capture that in a simple policy. You build it through ongoing conversations with educators and students, embedded into digital citizenship, tech use, and broader learning goals.

Looking ahead three to five years, what’s your forecast for AI in education?

I think a lot of the AI-in-education companies you see today won’t exist. The market will consolidate, and you’ll see a reorientation back to familiar patterns.

Every time a disruptive technology appears, there’s a burst of innovation, and then things snap back toward the status quo. I often come back to the line from The Who: “Meet the new boss, same as the old boss.” That’s where I think we’ll land in many places.

We’ll see more AI tools in classrooms, but not necessarily a deep, systemic transformation of practice. And the equity pattern will repeat: many of the “haves” will have even more opportunity, and the “have-nots” will be further behind unless we’re intentional.

So what does that “top 20%” of future-ready leadership look like—the ones who don’t snap back to business as usual?

They’re mission-driven, not tool-driven. They’re clear on the experiences they want learners to have and align resources, policies, and professional growth around that vision.

They also think systemically about capacity-building. For example, if teacher prep programs and graduate programs thoughtfully embed AI—ethics, equity, fluency, not just tools—you start changing the system upstream. The leaders who prioritize innovation over fear, who see change as an opportunity rather than a threat, and who center human dignity and equity in their decisions—that’s the group that will flourish. My hope is that we can grow that group beyond 20%.